Step 1: Deploy an Instant Cluster

- Open the Instant Clusters page on the Brightnode web interface.

- Click Create Cluster.

- Use the UI to name and configure your cluster. For this walkthrough, keep Bnode Count at 2 and select the option for 16x H100 SXM GPUs (eight per Bnode). Keep the Bnode Template at its default setting (Brightnode PyTorch).

- Click Deploy Cluster. You should be redirected to the Instant Clusters page after a few seconds.

Step 2: Clone the PyTorch demo into each Bnode

- Click your cluster to expand the list of Bnodes.

- Click a Bnode, for example CLUSTERNAME-bnode-0, to expand it.

- Click Connect, then click Web Terminal.

- In the terminal that opens, run this command to clone a basic main.py file into the Bnode’s main directory:

Step 3: Examine the main.py file

Let’s look at the code in our main.py file:

main.py

The main() function prints the local and global rank of each GPU process (this is also where you can add your own code). LOCAL_RANK is assigned dynamically to each process by PyTorch. All other environment variables are set automatically by Brightnode during deployment.
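Since the demo's main.py listing is not reproduced here, the following is a minimal sketch of a script in the same spirit, not the demo file itself. It assumes the processes are started by torchrun, which exports LOCAL_RANK, RANK and WORLD_SIZE to every worker; the helper name rank_info is hypothetical, and the single-process fallback is an illustrative design choice.

```python
import os


def rank_info(env=os.environ):
    """Return (local_rank, global_rank, world_size) for this process."""
    # torchrun exports these variables to every worker it starts; the defaults
    # describe a plain single-process run such as `python main.py`.
    local_rank = int(env.get("LOCAL_RANK", 0))
    global_rank = int(env.get("RANK", 0))
    world_size = int(env.get("WORLD_SIZE", 1))
    return local_rank, global_rank, world_size


def main():
    local_rank, global_rank, world_size = rank_info()
    # Only join a process group when a launcher such as torchrun started us.
    distributed = "WORLD_SIZE" in os.environ
    if distributed:
        import torch
        import torch.distributed as dist

        # Pin this process to its own GPU on the Bnode, then join the group.
        # The default env:// init reads MASTER_ADDR, MASTER_PORT, RANK and
        # WORLD_SIZE from the environment.
        torch.cuda.set_device(local_rank)
        dist.init_process_group(backend="nccl")

    print(f"global rank {global_rank} (world size {world_size}), local rank {local_rank}")
    # Your own training code goes here.

    if distributed:
        dist.destroy_process_group()


if __name__ == "__main__":
    main()
```

Reading the ranks through a small helper keeps the rank logic testable without GPUs, and running the file directly with python (no launcher) simply reports rank 0 of a world of size 1.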

Step 4: Start the PyTorch process on each Bnode

Run this command in the web terminal of each Bnode to start the PyTorch process:

launcher.sh

This command launches eight main.py processes per Bnode (one per GPU in the Bnode).
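The launcher.sh listing is not reproduced here either. As a hedged illustration only, a torchrun launch for this setup typically passes one process per GPU, the number of Bnodes, this Bnode's index, and the rendezvous address and port. The sketch below assembles such a command in Python; the variable names NUM_NODES, NODE_RANK, MASTER_ADDR and MASTER_PORT are assumptions about what Brightnode provides, the function name torchrun_command is hypothetical, and the example values are made up.

```python
import shlex


def torchrun_command(env, script="main.py", gpus_per_bnode=8):
    """Assemble a torchrun invocation for one Bnode.

    The keys read from `env` (NUM_NODES, NODE_RANK, MASTER_ADDR, MASTER_PORT)
    are assumed names for cluster-provided variables, not documented ones.
    """
    return [
        "torchrun",
        f"--nproc_per_node={gpus_per_bnode}",  # one worker process per GPU
        f"--nnodes={env['NUM_NODES']}",  # total Bnodes in the cluster
        f"--node_rank={env['NODE_RANK']}",  # this Bnode's 0-based index
        f"--master_addr={env['MASTER_ADDR']}",  # host that coordinates rendezvous
        f"--master_port={env['MASTER_PORT']}",
        script,
    ]


if __name__ == "__main__":
    # Made-up example values for the second of two Bnodes.
    example_env = {
        "NUM_NODES": "2",
        "NODE_RANK": "1",
        "MASTER_ADDR": "10.0.0.1",
        "MASTER_PORT": "29500",
    }
    print(shlex.join(torchrun_command(example_env)))
```

On a real Bnode you would pass os.environ (or whatever variables the cluster actually exposes) instead of the example dictionary. torchrun's rendezvous waits for all --nnodes participants to join, which is consistent with output appearing only after the command has been run on the last Bnode.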

After running the command on the last Bnode, you should see output similar to this:

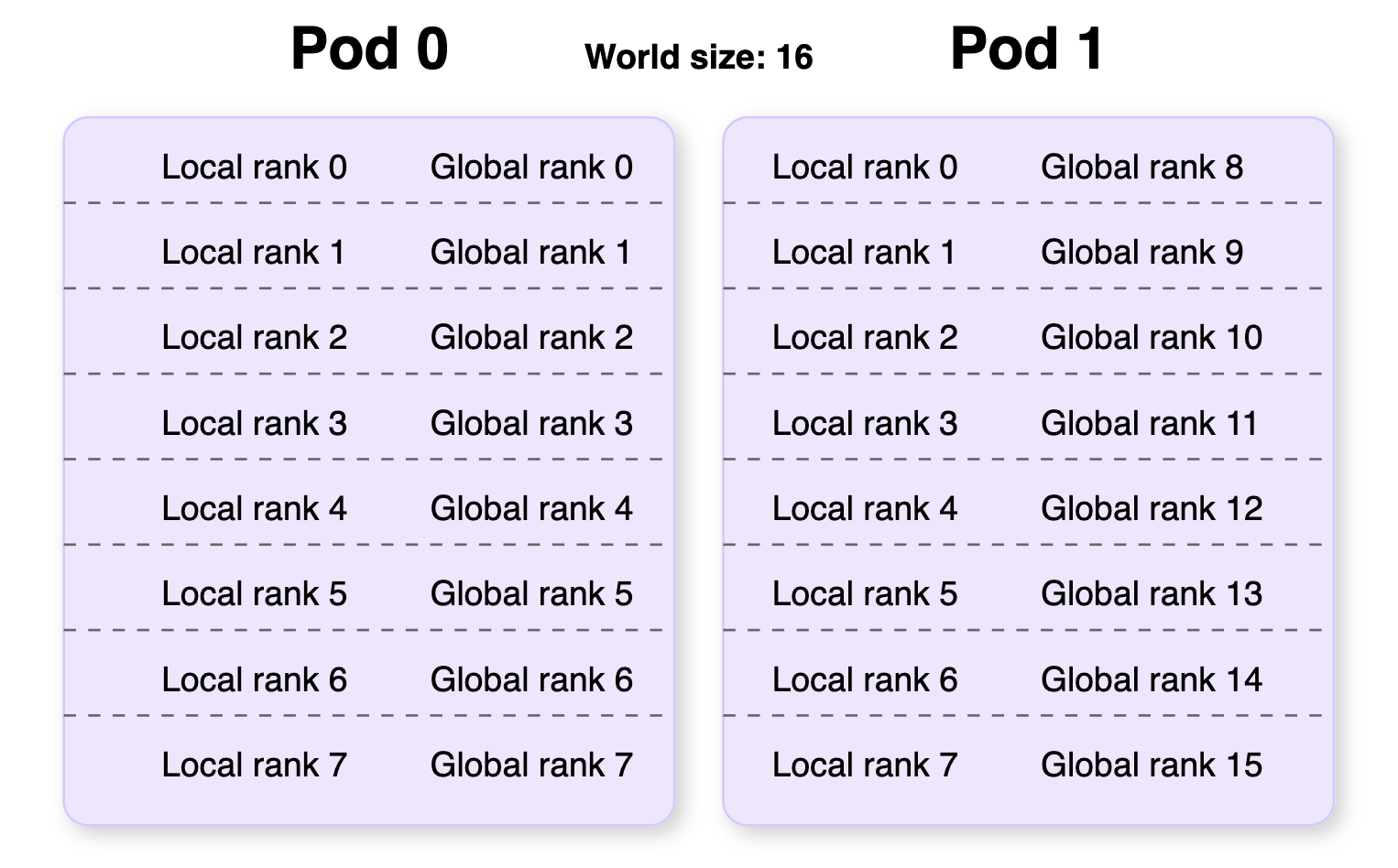

In each line of output, the first number is the global rank of the GPU process, which ranges from 0 to WORLD_SIZE-1 (WORLD_SIZE is the total number of GPUs in the cluster). In our example there are two Bnodes of eight GPUs each, so the global rank ranges from 0 to 15. The second number is the local rank, which defines the order of GPUs within a single Bnode (0-7 in this example).

The specific number and order of ranks may be different in your terminal, and the global ranks listed will be different for each Bnode.
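The rank arithmetic above can be made concrete with a short sketch. It assumes ranks are assigned in contiguous per-Bnode blocks, so global rank = Bnode index × GPUs per Bnode + local rank; with two Bnodes of eight GPUs, local rank 3 on the second Bnode (index 1) would be global rank 1 × 8 + 3 = 11. That layout matches torchrun's default static rendezvous when every Bnode uses the same --nproc_per_node and a fixed --node_rank; the function name rank_table is hypothetical.

```python
def rank_table(num_bnodes, gpus_per_bnode):
    """Map each (bnode_index, local_rank) pair to a global rank.

    Assumes ranks are handed out in contiguous blocks per Bnode.
    """
    return {
        (bnode, local_rank): bnode * gpus_per_bnode + local_rank
        for bnode in range(num_bnodes)
        for local_rank in range(gpus_per_bnode)
    }


if __name__ == "__main__":
    table = rank_table(num_bnodes=2, gpus_per_bnode=8)
    print(f"WORLD_SIZE = {len(table)}")  # 2 Bnodes x 8 GPUs
    for (bnode, local_rank), global_rank in sorted(table.items()):
        print(f"bnode-{bnode}  local rank {local_rank}  ->  global rank {global_rank}")
```

Under this assumption the first Bnode reports global ranks 0-7 and the second reports 8-15, which is why each Bnode's terminal lists a different set of global ranks.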

[Diagram: how local and global ranks are distributed across multiple Bnodes]

Step 5: Clean up

If you no longer need your cluster, return to the Instant Clusters page and delete it to avoid incurring extra charges. You can monitor your cluster usage and spending in the Billing Explorer, under the Cluster tab at the bottom of the Billing page.

Next steps

Now that you’ve successfully deployed and tested a PyTorch distributed application on an Instant Cluster, you can:

- Adapt your own PyTorch code to run on the cluster by modifying the distributed initialization in your scripts.

- Scale your training by adjusting the number of Bnodes in your cluster to handle larger models or datasets.

- Try different frameworks like Axolotl for fine-tuning large language models.

- Optimize performance by experimenting with different distributed training strategies like Data Parallel (DP), Distributed Data Parallel (DDP), or Fully Sharded Data Parallel (FSDP).